Dataset collection

This work uses the Netflix Animation Library as the data source. The dataset is a high-quality collection of animated works that is widely used in animation research and development. It contains over 500 animated works, ranging from early 20th-century classics to early 21st-century CGI animations. Its diversity shows in several respects. First, it includes a variety of animation styles, such as 2D, 3D, and stop-motion animation. Second, it covers a wide range of thematic categories, including sci-fi, fantasy, and adventure. Third, it contains extensive metadata, such as directors, screenwriters, production companies, release years, and audience ratings, which provide multidimensional features for training DL models. Because the dataset includes animated works from different periods, types, and styles, it reflects the diversity and development trends of the animation field and provides a solid foundation for training and validating artistic creativity evaluation models.

In the data preprocessing stage, the image data are first standardized: each image is resized to a uniform resolution (such as 512 × 512 pixels), and pixel values are normalized to the range [0, 1] to eliminate scale differences between images. Metadata are then encoded and normalized. Categorical information such as directors and production companies is converted into one-hot encoding, and numerical information such as release year and audience ratings is standardized. To enhance the model’s generalization capability, data augmentation techniques such as random cropping, rotation, and color adjustment are applied during training to generate more diverse training samples. Finally, the dataset is divided into a training set (80%) and a test set (20%) for DL model training and validation. With these preprocessing steps, the Netflix Animation Library not only supports the development of the artistic creativity evaluation model but also offers valuable data support for animation education and industry applications. The preprocessed data are then input into the fused BPNN and StyleGAN model for training. The BPNN learns the mapping between the features of the artworks and the evaluation results from the structured data. The StyleGAN generates high-quality artistic creative images, providing rich visual features for the evaluation model. Through this fusion, the model considers both the structural and visual characteristics of the artworks, enabling precise classification and evaluation of artistic creativity.
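The preprocessing steps described above can be illustrated with a minimal NumPy sketch. It is not the authors' pipeline: the function names, the nearest-neighbour resizing, the assumption of 8-bit input images, and the fixed random seed are all illustrative choices, and data augmentation is omitted for brevity.

```python
import numpy as np

def resize_nearest(image, size=512):
    """Resize an H x W x C image to size x size (nearest-neighbour, an assumed method)."""
    h, w = image.shape[:2]
    rows = np.arange(size) * h // size  # source row for each output row
    cols = np.arange(size) * w // size  # source column for each output column
    return image[rows][:, cols]

def scale_pixels(image):
    """Map 8-bit pixel values to floats in [0, 1]."""
    return image.astype(np.float32) / 255.0

def one_hot(labels):
    """One-hot encode categorical metadata such as directors or studios."""
    vocab = sorted(set(labels))
    index = {name: i for i, name in enumerate(vocab)}
    encoded = np.zeros((len(labels), len(vocab)), dtype=np.float32)
    encoded[np.arange(len(labels)), [index[name] for name in labels]] = 1.0
    return encoded, vocab

def standardize(values):
    """Z-score numerical metadata such as release year or audience rating."""
    values = np.asarray(values, dtype=np.float64)
    std = values.std()
    return (values - values.mean()) / (std if std > 0 else 1.0)

def split_indices(n, test_fraction=0.2, seed=0):
    """Shuffle sample indices and split them into training and test sets."""
    order = np.random.default_rng(seed).permutation(n)
    n_test = int(round(n * test_fraction))
    return order[n_test:], order[:n_test]

# Hypothetical usage on one synthetic frame and three metadata records.
frame = np.full((300, 400, 3), 255, dtype=np.uint8)
pixels = scale_pixels(resize_nearest(frame))
studios, vocab = one_hot(["A", "B", "A"])
years = standardize([1995, 2001, 2016])
train_idx, test_idx = split_indices(500)
```

With 500 samples, the split yields 400 training and 100 test indices, matching the 80%/20% division stated in the text.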

Experimental environment

To validate the proposed algorithm, development and experiments are carried out on a Windows computer. Table 2 lists the environment configuration.

Parameter settings

The hyperparameter settings are as follows. The Adam optimizer is used to minimize the loss function. The number of epochs is set to 120, with an initial learning rate (LR) of 0.001. The LR is reduced by 50% when the loss stops improving. During training, a Dropout layer with a dropout rate of 0.5 is added between the input and output layers. The batch size is set to 64.
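As a rough illustration of these settings, the sketch below collects the stated hyperparameters in a configuration object and implements a simple "halve on plateau" learning-rate rule in plain Python. The class names, the `patience` value, and the `min_delta` threshold are assumptions; the text does not state how long the loss must stagnate before the LR is reduced.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class TrainConfig:
    epochs: int = 120             # stated in the text
    learning_rate: float = 1e-3   # stated in the text
    lr_decay_factor: float = 0.5  # "reduced by 50%"
    dropout: float = 0.5          # stated in the text
    batch_size: int = 64          # stated in the text
    patience: int = 5             # assumption: not given in the text

class HalveOnPlateau:
    """Multiply the LR by `factor` after `patience` epochs without improvement."""

    def __init__(self, lr, factor=0.5, patience=5, min_delta=0.0):
        self.lr = lr
        self.factor = factor
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.stale = 0  # consecutive epochs without improvement

    def step(self, loss):
        if loss < self.best - self.min_delta:
            self.best = loss
            self.stale = 0
        else:
            self.stale += 1
            if self.stale >= self.patience:
                self.lr *= self.factor
                self.stale = 0
        return self.lr

# Hypothetical loss curve that stalls for two epochs.
config = TrainConfig()
scheduler = HalveOnPlateau(config.learning_rate, config.lr_decay_factor, patience=2)
history = [scheduler.step(loss) for loss in [1.0, 0.8, 0.8, 0.8, 0.7]]
```

Deep learning frameworks usually ship a comparable scheduler (for example, PyTorch's `torch.optim.lr_scheduler.ReduceLROnPlateau`), whose exact patience semantics may differ from this sketch.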

Performance evaluation

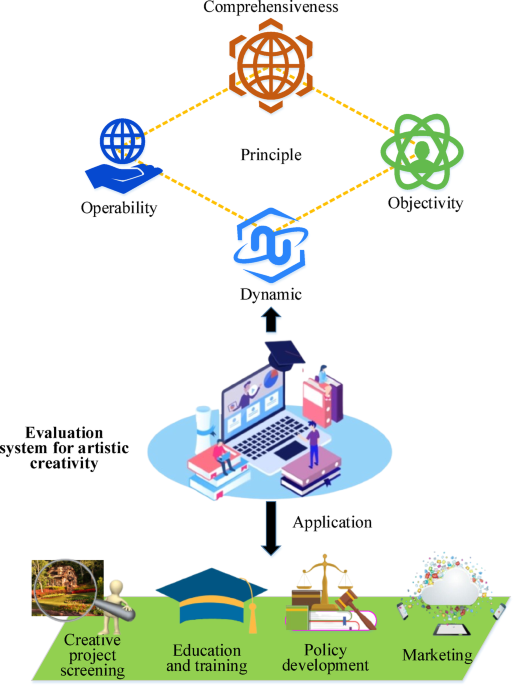

To analyze the performance of the proposed model, it is compared with GAN46, StyleGAN, BPNN47, and the model proposed by Jin et al. in the related field. The evaluation is based on the loss function value, fitting effect, accuracy, precision, recall, and F1 score. The loss function value reflects the error convergence of the model during training; a lower loss value indicates that the model fits the training data better. The fitting performance is measured by comparing the trends of the model’s predicted values with those of the actual values; a good fit means the model accurately captures the patterns in evaluating the innovativeness of artworks. Accuracy is the proportion of correct predictions among all predictions, reflecting the overall correctness of the evaluation. Precision is the proportion of truly innovative works among those the model predicts as innovative, measuring the model’s conservatism. Recall is the proportion of innovative works that the model identifies, measuring the model’s sensitivity. The F1 score is the harmonic mean of precision and recall, providing a balanced measure of the model’s performance. Through the combined evaluation of these metrics, the proposed model demonstrates superior performance in artistic creativity evaluation and offers reliable technical support for innovation and entrepreneurship assessments.
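The four classification metrics defined above can be computed from the counts of true and false positives and negatives. The sketch below assumes a binary labelling (1 for "innovative", 0 otherwise), which is a simplification for illustration; the function name and the sample labels are hypothetical.

```python
def classification_metrics(y_true, y_pred):
    """Return accuracy, precision, recall, and F1 for binary labels (1 = innovative)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # innovative, predicted innovative
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # not innovative, predicted not
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false alarm
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # missed innovative work

    accuracy = (tp + tn) / len(pairs) if pairs else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall) if (precision + recall) else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

# Hypothetical labels for eight works.
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 1, 0, 0, 0, 1, 1, 0]
metrics = classification_metrics(y_true, y_pred)
```

In this toy example there are three true positives, three true negatives, one false positive, and one false negative, so all four metrics equal 0.75.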

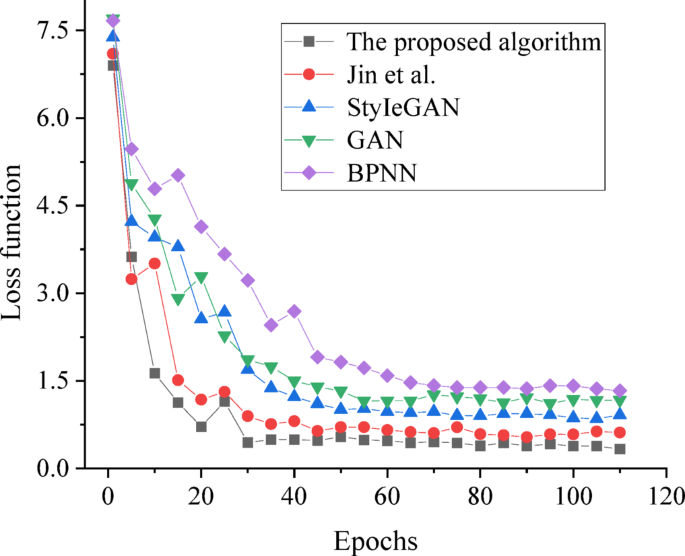

Figure 4 displays the comparison of the loss function values of each model algorithm.

Fig. 4. Convergence results of models under different algorithms.

In Fig. 4, the proposed BPNN fused with StyleGAN achieves the lowest loss value among the compared algorithms. Specifically, its loss stabilizes at around 0.38 after 30 iterations, while the final loss values of the other algorithms all exceed 0.62. Therefore, in the evaluation of artistic creativity and innovation, the proposed evaluation model demonstrates superior convergence performance, enabling intelligent innovation evaluation of artistic creative works.

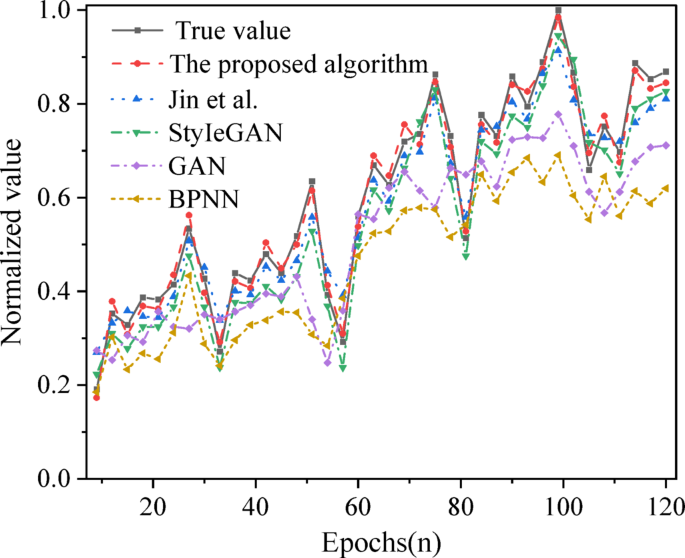

Figure 5 presents an analysis of the fitting effect of the proposed algorithm compared with other models and the actual value.

Fig. 5. Normalized fitting results of various algorithms with real values.

Figure 5 shows that the proposed algorithm closely follows the trend of the real values and predicts the normalized peak and trough values more accurately. By contrast, the predictions of the other algorithms deviate significantly from the real values at the peaks and troughs.

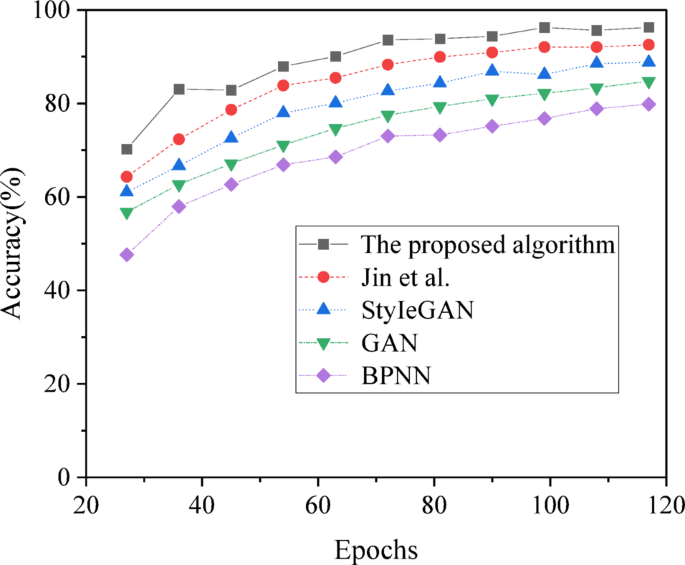

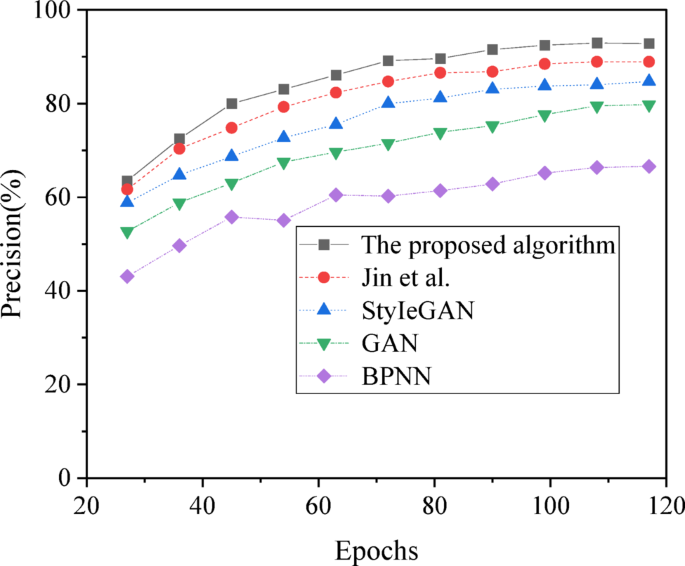

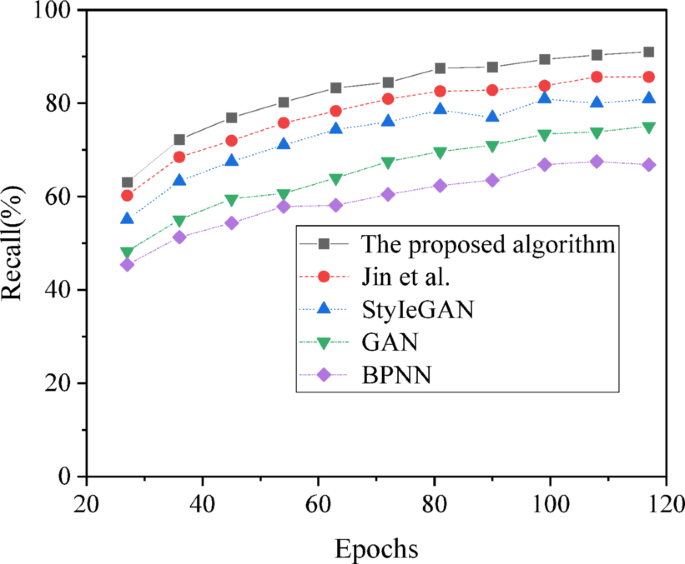

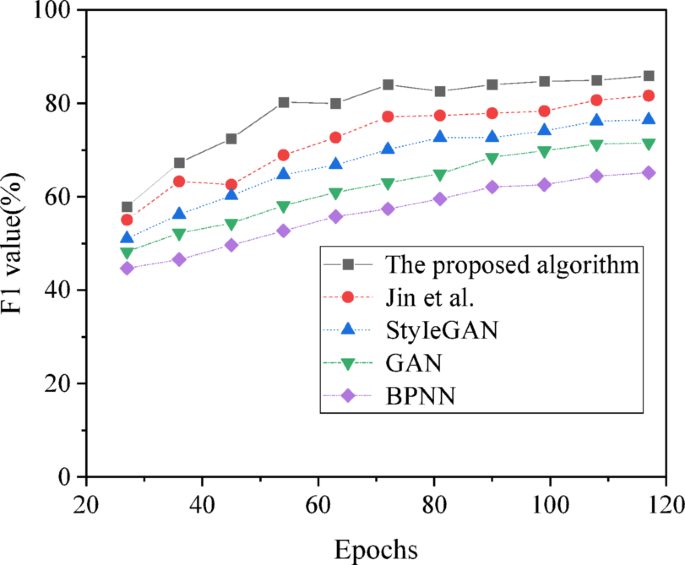

Figures 6, 7, 8 and 9 display the further analysis of the predictive accuracy of innovation evaluation based on the indicators of accuracy, precision, recall, and F1 score for each algorithm.

Fig. 6. Accuracy results of innovation evaluation prediction for artistic creative images under various algorithms.

Fig. 7. Precision results of innovation evaluation prediction for artistic creative images under various algorithms.

Fig. 8. Recall results of innovation evaluation prediction for artistic creative images under various algorithms.

Fig. 9. F1 value results of innovation evaluation prediction for artistic creative images under various algorithms.

As shown in Figs. 6, 7, 8 and 9, the evaluation accuracy of each algorithm first increases and then stabilizes as the number of iteration cycles grows. Compared with GAN, StyleGAN, BPNN, and the model proposed by Jin et al. in the relevant field, the proposed model reaches an accuracy of 96.30%, an increase of at least 3.75%. The algorithms rank as follows: the proposed model > the algorithm proposed by Jin et al. > StyleGAN > GAN > BPNN. In addition, the precision, recall, and F1 values of the proposed model reach 92.82%, 91.06%, and 85.88%, respectively, an improvement of at least about 4% in evaluation accuracy over the other algorithms. Therefore, the proposed artistic creative innovation evaluation model based on BPNN fused with StyleGAN achieves better prediction accuracy for innovation and entrepreneurship evaluation.

To validate the model’s generalization ability, experiments are conducted on multiple datasets, including the original Netflix Animation Library dataset and two additional datasets. The first is the Annecy International Animation Film Festival (AIAFF) dataset. Annecy is one of the most significant animation film festivals in the world, and its official website provides information on some of the award-winning and participating works. The second is the Sundance Film Festival (SFF) Animation section dataset, which covers a wide variety of animated works and offers abundant resources for the collection. Table 3 presents the results.

Table 3 shows that the model performs best on the Netflix Animation Library dataset, achieving an accuracy of 96.30%, a precision of 92.82%, a recall of 91.06%, and an F1 score of 85.88%. This indicates that the model can efficiently identify and assess the innovativeness of artworks in this dataset. However, when applied to the AIAFF dataset, the model’s performance slightly declines, with accuracy at 94.85%, precision at 91.50%, recall at 89.70%, and an F1 score of 85.20%. This result reflects that while the model still maintains a high level of accuracy and reliability in handling animation works from different regions and styles, it is somewhat influenced by cultural diversity and stylistic differences. Further, on the SFF dataset, the model’s accuracy drops to 93.20%, precision is 89.30%, recall is 88.50%, and the F1 score is 83.90%. This suggests that while the model still performs well on animation artworks from various cultural backgrounds, its generalization ability faces challenges when confronted with a broader range of artistic forms and cultural differences. Overall, the model’s performance across these three datasets demonstrates its effectiveness in evaluating artistic creativity and some degree of generalization ability. However, it also highlights the need for further optimization in cross-cultural scenarios to improve its applicability and accuracy across a wider spectrum of animation art.
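The size of the decline on each additional dataset can be made explicit with a short calculation. The sketch below uses only the figures quoted in the paragraph above and reports each drop in percentage points relative to the Netflix Animation Library results; the variable and function names are illustrative.

```python
# Metrics (in %) as quoted in the text for Table 3.
results = {
    "Netflix": {"accuracy": 96.30, "precision": 92.82, "recall": 91.06, "f1": 85.88},
    "AIAFF":   {"accuracy": 94.85, "precision": 91.50, "recall": 89.70, "f1": 85.20},
    "SFF":     {"accuracy": 93.20, "precision": 89.30, "recall": 88.50, "f1": 83.90},
}

def drop_from_baseline(results, baseline="Netflix"):
    """Return the drop in percentage points for every non-baseline dataset."""
    base = results[baseline]
    return {
        name: {metric: round(base[metric] - value, 2) for metric, value in scores.items()}
        for name, scores in results.items()
        if name != baseline
    }

drops = drop_from_baseline(results)
for name, scores in drops.items():
    print(name, scores)
```

On these figures, accuracy falls by 1.45 points on AIAFF and by 3.10 points on SFF, while the F1 score falls by 0.68 and 1.98 points, respectively.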

To more intuitively demonstrate the evaluation process and results of the proposed assessment system for specific animated works, three stylistically distinct animated films, Spirited Away, Toy Story, and Big Fish & Begonia, are selected for case analysis, as shown in Table 4. These works have a broad influence in the animation field and effectively showcase the system’s evaluation capabilities.

Table 4 shows that, for the three animated works mentioned above, the system provides detailed and targeted scores and feedback along three dimensions: innovation, unique style, and market potential. Taking Spirited Away as an example, the system gives it a score of 95 for innovation and recognizes its high level of innovation in narrative structure and visual style. In particular, its unique spiritual worldview and delicate use of color add distinctive artistic value to the work. Meanwhile, the system points out that there is still room for further optimization in market promotion, such as strengthening international market expansion and brand development to better realize its commercial value.

Discussion

Based on the research findings above, the proposed model is compared with GAN, StyleGAN, BPNN, and the model proposed by Jin et al. in terms of performance. The results indicate that the proposed model performs best in terms of loss function values: it converges faster, reaches the lowest loss value, and better predicts the normalized peak and trough values. This is consistent with the views of Zhou et al.48 and Çiçek et al.49. Further analysis of its predictive performance shows that the proposed model’s accuracy is significantly better than that of the other models, reaching 96.30%. Therefore, the proposed artistic innovation evaluation model based on BPNN fused with StyleGAN predicts innovation and entrepreneurship evaluation more accurately. This is consistent with the views of Qi et al.50. The model is expected to become an important tool for innovation evaluation in the field of artistic creativity. It can provide intelligent and accurate evaluation services for artistic creators, while also providing strong support for the development of the creative industry.

In art education environments, traditional methods for assessing artistic creativity primarily rely on teachers’ subjective judgments, lacking objective quantitative indicators. For example, teachers typically evaluate students’ creativity by observing their creative processes, the completeness of their works, and interactions with students. However, this approach has notable limitations: assessment results are highly subjective, making cross-class or cross-school comparisons difficult, and it struggles to accurately reflect the innovativeness and market potential of students’ works. Additionally, traditional methods often lack in-depth analysis of multi-dimensional characteristics of student works, such as stylistic uniqueness, innovative technical applications, and the depth of cultural connotations. These limitations not only affect the quality of art education but also restrict the comprehensive development of students’ creativity. To address these challenges, recent studies have explored applying AI and deep learning technologies to artistic creativity assessment. For instance, Sung et al. developed a deep learning-based computerized creativity assessment tool capable of automated scoring, enhancing objectivity and efficiency. However, most of these studies focus on technology development and validation, lacking close integration with the practical needs of art education. In art education, an assessment system must not only provide objective scores but also offer specific feedback and improvement suggestions for students and teachers to foster the development of students’ creativity.

The proposed potential application of the artistic creativity assessment system within educational environments is rooted in a profound understanding of the limitations of traditional assessment methods and innovative improvements to existing technologies. By leveraging deep learning and AI, the system can automatically extract key features of artistic works and evaluate them across multiple dimensions, including innovativeness, unique style, and market potential. Compared with traditional methods, the system not only provides more objective and quantifiable assessment results but also offers real-time feedback and targeted improvement suggestions for students and teachers. For example, in the “Animation Creative Concepts” course, students’ submitted creative sketches can be quickly scored for innovativeness through the system. Meanwhile, the system analyzes the stylistic characteristics and market potential of the works and provides specific improvement recommendations. This real-time feedback mechanism helps students better understand the strengths and weaknesses of their works, enabling them to make targeted improvements and enhance their creativity and practical capabilities.

From the perspective of the system’s applicability to future creative works, a dynamic data update mechanism can be established to periodically collect the latest artworks and creative cases, ensuring the system remains timely and adaptable. Additionally, by incorporating cross-domain data fusion, such as including data from painting, sculpture, architectural design, and other fields in the model’s training, the system’s generalization ability can be enhanced. Moreover, through modular design, the model can flexibly adapt to different types and styles of artworks, and improve its ability to evaluate future creative works. These measures will ensure the system can better support the evaluation of creative works that transcend existing values and norms.

Although the proposed model demonstrates excellent technical performance, its interpretability remains a key issue that needs to be addressed. To help users better understand and trust the model’s evaluation results, this work analyzes the model’s decision-making process in depth. Specifically, feature importance analysis and attention mechanism visualization are used to highlight the features the model focuses on when evaluating artworks. For example, feature importance analysis reveals the extent to which the model relies on characteristics such as color contrast, composition complexity, and style uniqueness, all of which play a critical role in the model’s final evaluation. Attention mechanism visualization, in turn, directly and intuitively displays the areas the model focuses on when processing images of artworks. For instance, in an animated work, the model may focus more on the details of a character’s expression, the color layers in the background, or the dynamic effects of the overall scene. These analyses not only give users an intuitive understanding of the model’s decision logic but also offer insights for further optimization of the model. This makes the model more persuasive and practical in the highly subjective field of artistic creativity evaluation.
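The text does not specify which feature importance method is used. One common, model-agnostic option is permutation importance, sketched below under that assumption: each feature column is shuffled in turn, and the resulting increase in prediction error indicates how much the model relies on it. The feature names, the toy linear "model", and its weights are hypothetical and serve only to make the example runnable.

```python
import numpy as np

def permutation_importance(predict, X, y, n_repeats=10, seed=0):
    """Mean increase in squared error when each feature column is shuffled."""
    rng = np.random.default_rng(seed)
    base_error = np.mean((predict(X) - y) ** 2)
    importances = np.zeros(X.shape[1])
    for j in range(X.shape[1]):
        increases = []
        for _ in range(n_repeats):
            X_perm = X.copy()
            X_perm[:, j] = rng.permutation(X_perm[:, j])  # break the feature-target link
            increases.append(np.mean((predict(X_perm) - y) ** 2) - base_error)
        importances[j] = np.mean(increases)
    return importances

# Hypothetical features and a toy linear scorer standing in for the trained model.
feature_names = ["color_contrast", "composition_complexity", "style_uniqueness"]
weights = np.array([2.0, 0.5, 0.0])  # assumed weights, for illustration only
X = np.random.default_rng(1).normal(size=(200, 3))
y = X @ weights

scores = permutation_importance(lambda A: A @ weights, X, y)
ranking = [feature_names[i] for i in np.argsort(scores)[::-1]]
```

In this toy setup the feature with the largest weight receives the highest importance, and the feature with zero weight receives an importance of zero, since shuffling it leaves the predictions unchanged.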

To better showcase the system’s strengths and limitations, this work reviews previous research on creativity and art evaluation systems, particularly the work by Jin et al. The comparative analysis shows that the proposed system holds advantages in technological innovation and application effectiveness. However, it also has certain limitations, such as its dependence on the dataset and the constraints in defining innovation. Additionally, an analysis of the system’s applicability reveals that it is not limited to animation programs but can be extended to other art and design fields, such as graphic design and illustration. Specific teaching application suggestions are proposed for different programs based on their unique characteristics. Moreover, based on the system’s features and limitations mentioned above, directions for future improvements are outlined, such as incorporating more advanced DL algorithms to enhance the model’s ability to understand complex creative works.

Finally, to ensure that the system built can be applied to concrete educational cases and provide related effect evaluations, this work plans to develop a series of teaching cases based on the model, and cover both foundational and advanced course content. For example, in a course like “Animation Creative Concepts,” students can submit creative sketches through the system, and the system will provide innovation evaluations and improvement suggestions. In an “Animation Project Practice” course, students can use the system to conduct a comprehensive evaluation of their full animation works, optimizing their market potential. Additionally, through actual teaching applications, feedback from students and teachers will be collected to assess the system’s teaching effectiveness. Representative student works will be selected to showcase the improvement process and effects before and after using the system. Through comparative analysis, the system’s impact on enhancing the innovation and market potential of student works will be visually demonstrated, thereby strengthening the argument of the research work. However, how the system provides feedback to learners, and whether this feedback positively influences their understanding of creativity and innovation, remains an issue for further exploration. Additionally, since the system has not yet been applied in an educational environment, the discussion of its educational effectiveness is currently in the speculative stage. Future research will need to validate the system’s practical value in educational settings through specific teaching cases and comparative experiments, such as analyzing changes in student learning outcomes after using the system, and their increased interest in the integration of AI with art.

In today’s highly competitive business environment, assessing artistic creativity is crucial for identifying and nurturing creative projects with market potential. Traditional evaluation methods often rely on subjective judgment and lack quantitative indicators, making it difficult to accurately reflect the innovation and commercial value of artistic works. The proposed artistic creativity evaluation model, based on the integration of BPNN and StyleGAN, provides new technological means and theoretical support for evaluating artistic creativity in the business context. The model automatically extracts features of artistic works using DL techniques and combines them with high-quality visual features generated by StyleGAN. This enables a comprehensive and objective evaluation of artworks along multiple dimensions, such as innovation, unique style, and market potential. Its high accuracy (96.30%) and predictive performance indicate that the model can effectively identify creative works with commercial potential, providing decision-making support for investors, art curators, educators, and policymakers. Additionally, the model’s dynamic and operable nature allows it to adapt to constantly changing cultural, technological, and market environments and to provide technical support for the application and promotion of artistic creativity in the business field. By integrating artistic creativity with commercial value, this work advances the development of artistic creativity assessment systems. Moreover, it offers new ideas and methods for the innovation and sustainable development of the creative industry.

The artistic creativity evaluation system platform developed in this work has not yet been open-sourced. The main reason is that the system is still in the optimization phase, and some features need further improvement to ensure stability and accuracy in different scenarios. Additionally, some of the technologies involved in the system may be subject to patent applications, so it is not yet appropriate to release it as open source. To further promote the application of the proposed system in education and other fields, this work plans to develop an online demonstration platform that visually showcases the system’s functions and effectiveness. This platform will reference existing open creativity scoring systems, such as Open Creativity Scoring, and provide transparent evaluation mechanisms along with real-world application examples to enhance users’ understanding of and trust in the system. The platform will allow users to upload artwork and receive real-time innovation evaluation results. It can also show how the model focuses on specific features of the artwork (such as color, composition, and style) and visualize the decision-making process.

A related system is AuDra, which quantifies the innovativeness and artistic value of creative paintings by analyzing visual features such as color, composition, and brushstrokes, combined with machine learning algorithms, thus providing objective evaluation indicators for painting creativity51. Compared with AuDra, the system in this work not only achieves technological breakthroughs but also uses deep learning and AI to offer more precise and objective quantitative indicators for multi-dimensional assessment of artistic creativity, significantly outperforming traditional methods in accuracy and efficiency. While AuDra primarily focuses on evaluating creative paintings, the system in this work covers a broader range of art forms, such as animation and graphic design, demonstrating wider applicability.

Additionally, the platform will offer practical application cases and demonstrate the system’s effectiveness in educational settings, such as the improvement process of student artworks and teaching feedback from educators. By incorporating user feedback mechanisms, this work aims to continuously optimize the system’s performance and user experience. The demonstration platform will help showcase the system’s practical application value and provide an innovative tool for art education and the creative industry, promoting the widespread adoption of art creativity evaluation technologies. The goal of this approach is to protect intellectual property while also promoting academic exchange and technological sharing.
